A New Benchmark for Robot Hands: Researchers Launch ManipulationNet

A global group of robotics researchers has introduced ManipulationNet, a benchmarking infrastructure designed to measure how well robots can manipulate objects in the real world. The effort aims to address one of the most persistent problems in robotics: the lack of a common benchmark for dexterous manipulation.

The research team includes scientists from institutions and organizations such as Rice University, the National Institute of Standards and Technology (NIST), the Massachusetts Institute of Technology (MIT), the University of California, Berkeley, Carnegie Mellon University, and ASTM International, among others. Their work introduces a shared testing platform where robots can be evaluated on standardized real-world tasks.

The Manipulation Problem

Robotic manipulation (the ability of machines to grasp, move, and interact with objects) is considered one of the central challenges of robotics. Although more than 4 million industrial robots operate globally, most perform highly repetitive tasks in tightly controlled environments.

Robots still struggle when objects are unpredictable, cluttered, or require delicate handling. Tasks that humans perform easily—such as inserting a cable, arranging objects, or assembling small parts—remain difficult for machines.

One reason progress has been slow is the lack of consistent evaluation methods. Robotics labs often test systems using different objects, environments, and performance metrics, making it difficult to compare results.

Fields such as computer vision and natural language processing experienced rapid breakthroughs once standardized benchmarks like ImageNet and GLUE were introduced. ManipulationNet is designed to play a similar role for robotics.

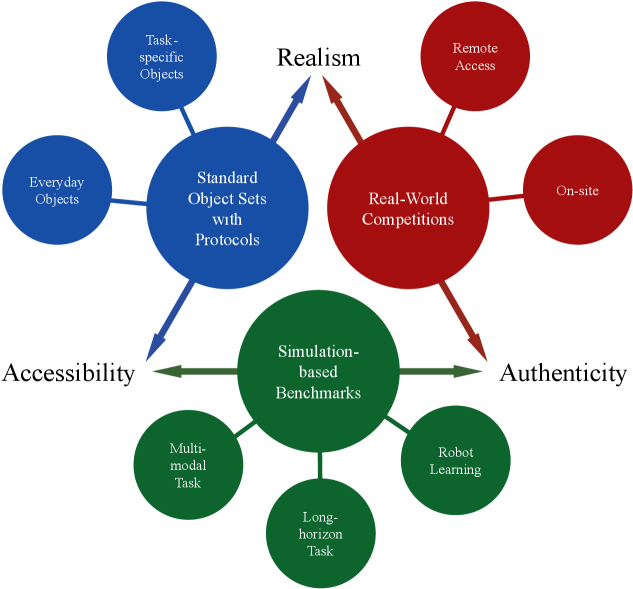

The “impossible trinity” of existing robotic manipulation benchmarking efforts. Prior initiatives can be broadly grouped into three categories: standardized object sets with task protocols, real-world competitions, and simulation-based benchmarks. Each category, however, addresses at most two of the three required aspects in theory, and none balances realism, accessibility, and authenticity well enough to function as a large-scale, real-world manipulation benchmark.

A Global Benchmarking Network

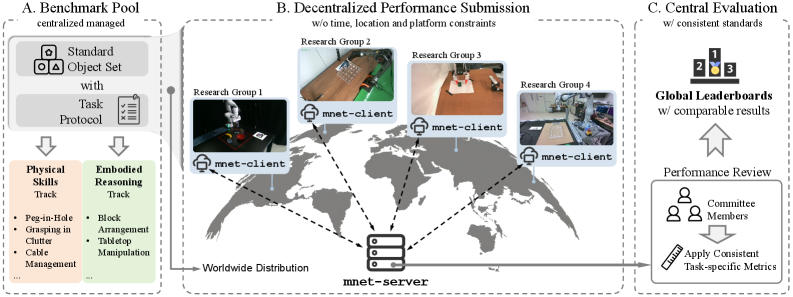

ManipulationNet provides a system where robotics teams around the world can perform identical tasks using standardized physical kits and submit their results to a central platform.

The infrastructure combines distributed testing with centralized verification. Researchers run experiments locally using their own robots but follow identical task protocols. Results are uploaded through a client software system that records execution data and video, which are then verified and scored by a central server.

This design aims to balance three goals that previous benchmarks struggled to achieve simultaneously:

Realism: tasks occur in the physical world

Accessibility: researchers can participate from anywhere

Authenticity: results are verified using consistent metrics
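To make the distributed-testing, central-verification idea above more concrete, here is a minimal Python sketch. It is not the mnet-client or the ManipulationNet server API: the `Submission` record, the `fingerprint` and `verify_and_score` helpers, the SHA-256 video check, and the plain success-rate score are all hypothetical choices made for illustration.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical sketch: NOT the actual mnet-client or ManipulationNet server API.


@dataclass
class Submission:
    """Record a lab's client might upload after running trials locally."""
    team: str
    task: str
    protocol_version: str
    trial_outcomes: list[bool]  # one entry per trial: True if the trial succeeded
    video_sha256: str           # fingerprint of the recorded execution video


def fingerprint(video_bytes: bytes) -> str:
    """Hash the raw video so the server can detect a swapped or edited file."""
    return hashlib.sha256(video_bytes).hexdigest()


def verify_and_score(sub: Submission, video_bytes: bytes, expected_protocol: str) -> dict:
    """Server side: reject mismatched protocols or videos, then compute a score."""
    if sub.protocol_version != expected_protocol:
        return {"accepted": False, "reason": "protocol mismatch"}
    if fingerprint(video_bytes) != sub.video_sha256:
        return {"accepted": False, "reason": "video does not match record"}
    if not sub.trial_outcomes:
        return {"accepted": False, "reason": "no trials"}
    rate = sum(sub.trial_outcomes) / len(sub.trial_outcomes)
    # An automatically accepted result would still be queued for human review.
    return {"accepted": True, "success_rate": rate, "pending_human_review": True}


video = b"raw video bytes"
sub = Submission("lab-a", "peg-in-hole", "v1", [True, True, False, True], fingerprint(video))
print(verify_and_score(sub, video, "v1"))
```

Hashing the video on the client and re-hashing it on the server is one simple way a central verifier could tie a reported score to the recorded execution; the real system may work differently, and, per the project's description, human validation remains part of the process.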

In practice, the workflow has three stages. Standard object sets are centrally designed and distributed so that every team uses the same task setup. Registered research groups perform the tasks with their own customized robotic systems and report results through the mnet-client. Submitted data is then evaluated centrally with standardized metrics and human validation, which is meant to keep performance assessments reliable and comparable across labs worldwide.

The project organizes benchmarks into two main tracks.

Physical Skills

The Physical Skills Track evaluates a robot’s ability to interact with objects under real-world conditions. Early tasks include:

Peg-in-hole assembly, which measures precision insertion with varying tolerances

Cable management, which tests manipulation of flexible objects

Grasping in clutter, where robots must retrieve objects from crowded scenes

These tasks are designed to isolate specific manipulation abilities while still reflecting real industrial challenges.
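Because peg-in-hole assembly varies the fit tolerance, results are naturally broken down by tolerance level. The sketch below assumes each trial is logged as a hypothetical `(clearance_mm, succeeded)` pair and computes a success rate per clearance; the data layout, units, and values are illustrative assumptions, not ManipulationNet's actual metric.

```python
from collections import defaultdict

# Hypothetical sketch: per-tolerance success rates for peg-in-hole trials.


def success_by_tolerance(trials: list[tuple[float, bool]]) -> dict[float, float]:
    """Group (clearance_mm, succeeded) trials and return the success rate per clearance.

    A smaller clearance means a tighter fit, which is usually harder to achieve.
    """
    counts = defaultdict(lambda: [0, 0])  # clearance -> [successes, attempts]
    for clearance, ok in trials:
        counts[clearance][0] += int(ok)
        counts[clearance][1] += 1
    # Report from the loosest fit to the tightest.
    return {c: s / n for c, (s, n) in sorted(counts.items(), reverse=True)}


# Made-up trial log, for illustration only.
trials = [(1.0, True), (1.0, True), (0.5, True), (0.5, False), (0.1, False), (0.1, False)]
print(success_by_tolerance(trials))
```

Reporting one rate per tolerance, rather than a single pooled number, shows where along the precision scale a system starts to fail.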

Embodied Reasoning

The second track focuses on cognitive abilities that guide physical actions.

In the Embodied Reasoning Track, robots must interpret instructions and visual cues to complete manipulation tasks. For example, a robot might be asked to rearrange objects based on natural language commands or replicate a structure using colored blocks.

This approach tests how well robots combine perception, reasoning, and motor control—capabilities that are essential for more general-purpose robotic systems.
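For the block-replication example, one simple way to score an attempt is to compare the built structure with the target slot by slot. The sketch below assumes a hypothetical representation in which each tabletop cell maps to a stack of block colors listed from bottom to top; ManipulationNet's actual task definition and scoring rules may differ.

```python
# Hypothetical sketch: slot-by-slot scoring of a colored-block replication attempt.
Structure = dict[tuple[int, int], list[str]]  # (column, row) -> colors, bottom to top


def replication_score(target: Structure, built: Structure) -> float:
    """Fraction of target slots (cell, height) whose color matches the built structure.

    Extra blocks in `built` are ignored here; a stricter scorer might penalize them.
    """
    total = matched = 0
    for cell, stack in target.items():
        built_stack = built.get(cell, [])
        for level, color in enumerate(stack):
            total += 1
            if level < len(built_stack) and built_stack[level] == color:
                matched += 1
    return matched / total if total else 1.0


target = {(0, 0): ["red", "blue"], (1, 0): ["green"]}
built = {(0, 0): ["red", "green"], (1, 0): ["green"]}
print(replication_score(target, built))
```

Here two of the three target slots match, so the attempt earns partial credit rather than a simple pass or fail.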

Building a Long-Term Record of Robot Capability

Beyond individual experiments, the creators of ManipulationNet envision the platform as a long-term record of robotic manipulation progress.

As more research groups submit results, the system will track improvements over time and identify which capabilities are approaching real-world readiness. The infrastructure may also help guide future research priorities by highlighting persistent weaknesses in manipulation systems.
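Tracking improvements over time can be as simple as recording when the best reported score on each task goes up. The sketch below assumes hypothetical `(date, task, score)` result tuples with made-up values; it illustrates the idea of a long-term record, not how ManipulationNet actually stores or ranks submissions.

```python
from datetime import date

# Hypothetical sketch: a per-task record of when the best reported score improved.
Result = tuple[date, str, float]  # (submission date, task name, score in [0, 1])


def best_so_far(results: list[Result]) -> dict[str, list[tuple[date, float]]]:
    """Return, for each task, the (date, score) points where the best score rose."""
    history: dict[str, list[tuple[date, float]]] = {}
    # Process submissions in chronological order (stable for same-day entries).
    for day, task, score in sorted(results, key=lambda r: r[0]):
        records = history.setdefault(task, [])
        if not records or score > records[-1][1]:
            records.append((day, score))
    return history


# Made-up example values, for illustration only.
results = [
    (date(2025, 6, 18), "cable-management", 0.55),
    (date(2025, 1, 10), "cable-management", 0.40),
    (date(2025, 3, 2), "cable-management", 0.35),
]
print(best_so_far(results))
```

Keeping only the improvement points yields a compact progress curve per task; a task whose curve flattens well below full success would flag one of the persistent weaknesses the project hopes to surface.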

In the near term, the project aims to bring the robotics community together around a small set of shared benchmarks. Over time, the benchmark library is expected to expand to cover a wider range of manipulation challenges.

Ultimately, the goal is to help answer two key questions: what robots can reliably do today—and what they still struggle to accomplish in the physical world.

For a field that increasingly promises robots capable of operating in human environments, establishing a trusted measure of real-world capability may prove as important as the algorithms themselves.

Read the full paper here: https://arxiv.org/html/2603.04363v1